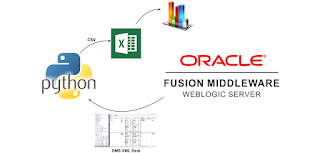

Oracle provides the Dynamic Monitoring Service (DMS) as part of WebLogic Server. It is extremely useful if you want to obtain aggregated data about an environment, for example during a performance test. The data which can be obtained from DMS is extensive and ranges from the average duration of service calls to JVM garbage collections to datasource statistics. DMS can be queried with WLST. See for example here. An example script based on this can be found here. You can also go directly to a web interface such as: http://<host>:<port>/dms/Spy. The DMS Spy servlet is by default only enabled on development environments but can be deployed on production environments (see here).

Obtaining data from DMS in an automated fashion, even with the WLST support, can be a challenge. In this blog I provide a Python 2.7 script which allows you to get information from the DMS and dump it in a CSV file for further processing. The script first logs in and then uses the obtained session information to download information from a specific table in XML. This XML is converted to CSV. The code does not require an Oracle Home (it is not WLST based). The purpose here is to provide an easy-to-use starting point which can be expanded to suit specific use cases. The script works against WebLogic 11g and 12c environments (it has been tested against 11.1.1.7 and 12.2.1). Do mind that the example URL given in the script obtains performance data on webservice operations. This works great on composites but not on Service Bus or JAX-WS services. You can download a general script here (which requires minimal changes to use) and a (more specific) script with examples of how to preprocess data in the script here.
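The XML-to-CSV step can be sketched as follows. This is not the downloadable script itself but a minimal illustration written for Python 3. The sample XML layout (`table`, `row` and `column` elements with a `name` attribute) and the function name `table_xml_to_csv` are assumptions made for the example, so check them against what your DMS instance actually returns.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Hypothetical sample; the real DMS XML layout may differ.
SAMPLE_XML = """<table name="example_table">
  <row>
    <column name="Name">getOrder</column>
    <column name="Invocations">12</column>
  </row>
  <row>
    <column name="Name">putOrder</column>
    <column name="Invocations">3</column>
  </row>
</table>"""


def table_xml_to_csv(xml_text):
    """Flatten <row>/<column name="..."> elements into CSV text."""
    root = ET.fromstring(xml_text)
    header = []  # column names in order of first appearance
    rows = []
    for row in root.iter("row"):
        record = {}
        for col in row.findall("column"):
            name = col.get("name")
            record[name] = (col.text or "").strip()
            if name not in header:
                header.append(name)
        rows.append(record)
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=header, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)  # columns missing from a row are left empty
    return out.getvalue()


if __name__ == "__main__":
    print(table_xml_to_csv(SAMPLE_XML))
```

Collecting the header from the data rather than hard-coding it keeps the sketch usable for different tables, at the cost of reading the whole document into memory first.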

Articles containing tips, tricks and nice to knows related to IT stuff I find interesting. Also serves as online memory.

Monday, December 19, 2016

Sunday, November 20, 2016

Oracle Service Bus: A quickstart for the Kafka transport

As mentioned in the following blog post by Lucas Jellema, Kafka is going to play a part in several Oracle products. For some use cases it might eventually even replace JMS. In order to allow for easy integration with Kafka, you can use Oracle Service Bus to create a virtualization layer around Kafka. Ricardo Ferreira from Oracle's A-Team has done some great work on making a custom Kafka Service Bus transport available to us. Read more about this here: http://www.ateam-oracle.com/

Friday, October 28, 2016

Sonatype Nexus 2.x: Using the REST API to clean-up your repository

Sonatype provides Nexus, an extensive artifact repository manager. It can hold large amounts of stored artifacts and still process requests quickly. It also has an extensive, easy-to-use API, which is a definite asset. When a project has been running for a longer period (say years), the repository often gets filled with large numbers of artifacts. This can become especially troublesome if the artifacts are large, such as JSF EAR files. These artifacts might not even have been released (be part of a deployed release). Nexus provides the option to remove artifacts older than a specific date. This however might also remove artifacts which are dependencies of other artifacts (older releases) which you might want to keep. When those other artifacts are built, the build might break because the artifacts they refer to have been removed. In order to allow more fine-grained control over what to remove, I've created the following script. The script uses only the releases repository (snapshots are not taken into account and I am not sure what the script does there). Disclaimer: first test if this script does what you want in your situation. It is provided as is without any warranties.
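The selection problem described above (remove old artifacts, but keep the ones something else still depends on) can be sketched independently of Nexus. The snippet below is not the script from this post and makes no REST calls. The function name `select_for_removal`, the coordinate strings and the dates are all hypothetical and only illustrate the filtering step.

```python
from datetime import date


def select_for_removal(artifacts, keep, cutoff):
    """Return coordinates deployed before `cutoff` that are not in `keep`.

    artifacts: dict mapping a coordinate string to its deployment date.
    keep: set of coordinates that must survive, for example
          dependencies of releases you still want to be able to build.
    """
    return sorted(
        coord
        for coord, deployed in artifacts.items()
        if deployed < cutoff and coord not in keep
    )


# Hypothetical repository content.
ARTIFACTS = {
    "com.example:app:1.0": date(2014, 3, 1),
    "com.example:lib:1.0": date(2014, 3, 1),
    "com.example:app:2.0": date(2016, 9, 1),
}
# lib:1.0 is old but still referenced by a release we want to keep.
KEEP = {"com.example:lib:1.0"}

if __name__ == "__main__":
    print(select_for_removal(ARTIFACTS, KEEP, date(2016, 1, 1)))
```

Keeping the decision logic separate from the calls that actually delete artifacts makes it easy to do a dry run first, which fits the disclaimer above.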

Thursday, October 27, 2016

Sonatype Nexus 3.0. Using the new Groovy API

Sonatype Nexus 3.0 does not have the REST API which was available in Nexus 2.x (see the discussion here). This poses a challenge if you want to automate certain tasks. Nexus 3 does however provide a Groovy API which allows you to write your own scripts and upload them to Nexus. You can then call your scripts and use the JSON result. In order to get this working, several things need to be done. First the script needs to be developed (during which code completion comes in handy). Next the script needs to be condensed to a single line and put in a JSON request. After that, the JSON request needs to be sent to a specific endpoint. You can imagine this can be cumbersome. Sonatype has provided Groovy scripts to deploy their Groovy scripts. See here. I've created something similar using Python so you do not require a download of dependencies, a JVM and a Groovy installation to perform this task. This makes it easier to do this from, for example, a build server.
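The "condense to a single line and put in a JSON request" step can be sketched like this. It is not the script from this post, only an illustration of how JSON encoding takes care of the newlines. The field names `name`, `type` and `content` are my assumption about what the Nexus 3 script endpoint expects, so verify them against the Sonatype documentation for your version. The Groovy one-liner and the function name `build_script_request` are hypothetical.

```python
import json

# Hypothetical multi-line Groovy script.
GROOVY_SOURCE = """def greeting = 'hello'
return greeting
"""


def build_script_request(name, groovy_source):
    """Wrap a Groovy script in a single-line JSON request body.

    json.dumps escapes the newlines in the script as \\n, so the
    resulting request body no longer contains literal line breaks.
    """
    body = {"name": name, "type": "groovy", "content": groovy_source}
    return json.dumps(body)


if __name__ == "__main__":
    print(build_script_request("example", GROOVY_SOURCE))
```

The returned string can then be sent as the request body with whatever HTTP client is available on the build server.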

Monday, October 3, 2016

Oracle NoSQL Database 4.x and the Node.js driver 3.x

There are two ways you can access Oracle NoSQL database from a Node.js application. These are illustrated below. You can use the nosqldb-oraclejs driver and you can use Oracle REST Data Services.

In my previous blog post I illustrated how you can access Oracle NoSQL database by using the nosqldb-oraclejs driver. I encountered an issue when using the NoSQL database version 12R1.4.0.9 with the currently newest available Node.js driver for NoSQL database nosqldb-oraclejs 3.3.15.

Wednesday, August 17, 2016

Node.js and Oracle NoSQL Database

Oracle NoSQL Database is an interesting option to consider when you want a schemaless, fast, scalable database which can provide relaxed (eventual) consistency. Oracle provides a Node.js driver for this database. In this blog I'll describe how to install Oracle NoSQL database and how to connect to it from a Node.js application.

The Node.js driver provided by Oracle is currently in preview version 3.3.7. It uses NoSQL client version 12.1.3.3.4 which does not work with 4.x versions of NoSQL database, so I downloaded Oracle NoSQL Database, Enterprise Edition 12cR1 (12.1.3.3.5) from here (the version number was closest to the version number of the client software).

Saturday, August 13, 2016

Application Container Cloud: Node.js hosting with enterprise-grade features

Oracle's Application Container Cloud allows you to run Java SE, Node.js and PHP applications (and more is coming) in a Docker container hosted in the Oracle Public Cloud (OPC). Node.js can crash when applications do strange things. You can think of incorrect error handling, blocking calls or strange memory usage. In order to host Node.js in a manageable, stable and robust way in an enterprise application landscape, certain measures need to be taken. Application Container Cloud provides many of those measures and makes hosting Node.js applications easy. In this blog article I'll describe why you would want to use Oracle Application Container Cloud. I'll illustrate this with examples of my experience with the product.

Thursday, August 11, 2016

Node.js: My first SOAP service

I created a simple HelloWorld SOAP service running on Node.js. Why did I do that? I wanted to find out whether Node.js is a viable solution to use as a middleware layer in an application landscape. Not all clients can call JSON services. SOAP is still very common. If Node.js is to be considered for such a role, it should be possible to host SOAP services on it. My preliminary conclusion is that it is possible to host SOAP services on Node.js but you should carefully consider how you want to do this.

I tried to create the SOAP service in two distinct ways.

- xml2js. This Node.js module allows transforming XML to JSON and back. The JSON which is created can be used to easily access content with JavaScript. This module is fast and lightweight, but does not provide specific SOAP functionality.

- soap. This Node.js module provides some abstractions and features which make working with SOAP easier. The module is especially useful when calling SOAP services (when Node.js is the client). When hosting SOAP services, the means to control the specific response to a call are limited (or undocumented).

Using both modules, I encountered some challenges. I will describe them and how (and if) I solved them. You can find my sample code here.

Tuesday, August 9, 2016

Node.js: A simple pattern to increase perceived performance

The asynchronous nature of code running on Node.js provides many interesting options for service orchestration. In this example I will call two translation services (Google and SYSTRAN). I will call both of them within milliseconds of each other. The first answer to be returned will be the answer returned to the caller. The second answer will be ignored. I've used a minimal set of Node modules for this: http, url and request. I also wrapped the translation APIs to provide a similar interface which allows me to call them with the same request objects. You can download the code here. In the below picture this simple scenario is illustrated. I'm not going to talk about the event loop and the call stack. Watch this presentation for a nice elaboration on those.
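The "first answer wins" pattern is not specific to Node.js. As a language-neutral illustration, here is a minimal sketch in Python using asyncio. It is not the downloadable Node.js code. The two translators are fake coroutines with hard-coded delays, and the names `fake_translator` and `first_answer` are made up for the example. One difference from the description above: the slower call is cancelled here instead of being left to finish and then ignored.

```python
import asyncio


async def fake_translator(name, delay, text):
    """Stand-in for a remote translation call; sleeps instead of doing I/O."""
    await asyncio.sleep(delay)
    return f"{name}:{text}"


async def first_answer(text):
    """Start both calls at once and return whichever finishes first."""
    tasks = [
        asyncio.create_task(fake_translator("fast", 0.01, text)),
        asyncio.create_task(fake_translator("slow", 0.2, text)),
    ]
    done, pending = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
    for task in pending:
        task.cancel()  # the slower answer is no longer needed
    return done.pop().result()


if __name__ == "__main__":
    print(asyncio.run(first_answer("hello")))
```

The caller only ever waits as long as the fastest service takes, which is where the gain in perceived performance comes from.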

Wednesday, July 20, 2016

Oracle SOA Suite Code Quality: SonarQube Quality Gates, XML Plugin and custom XPath rules

There are several ways to do code quality checks in SOA Suite. In this blog post I will describe a minimal effort setup which uses Jenkins 2.9, SonarQube 5.6 and the SonarQube XML Plugin 1.4.1. SonarQube is a popular tool to check and visualize code quality. An XML Plugin is available for SonarQube which allows you to define custom XPath rules. At the end of this post I will briefly describe several other options which you can consider to help you improve code quality by doing automated checks.

Using SonarQube and the XML Plugin to do code quality checks on SOA Suite components has several benefits compared to other options described at the end of this post.

- It is very flexible and relatively technology independent. It allows you to scan any XML file such as BPEL, BPMN, OSB, Mediator, Spring, composite.xml files

- It requires only configuration of SonarQube, the SonarQube XML Plugin and the CI solution (Jenkins in this example)

- It has few dependencies. It does not require an Oracle Home or custom JAR files on your SonarQube server.

- The XML Plugin is supported by SonarSource, so there is a high probability it will still work in future versions of SonarQube.

- Writing rules is simple: they are XPath expressions. It does not require you to write Java code to create checks.
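To give an idea of what such a rule checks, here is a small sketch in Python that emulates one hypothetical rule: every `assign` element should have a `name` attribute. In the XML Plugin you would express this directly as an XPath expression. The standard library's ElementTree only supports a subset of XPath, so the attribute test is done in Python here. The sample document, the rule itself and the function name `count_violations` are assumptions for illustration, and real BPEL files use namespaces which this sample leaves out.

```python
import xml.etree.ElementTree as ET

# Hypothetical, namespace-free sample resembling a process definition.
SAMPLE = """<process>
  <assign name="AssignInput"/>
  <assign/>
  <sequence><assign/></sequence>
</process>"""


def count_violations(xml_text, path, required_attribute):
    """Count elements matched by `path` that lack `required_attribute`."""
    root = ET.fromstring(xml_text)
    return sum(
        1 for element in root.findall(path)
        if element.get(required_attribute) is None
    )


if __name__ == "__main__":
    print(count_violations(SAMPLE, ".//assign", "name"))
```

A check like this is handy for trying out a rule locally on a few files before configuring it in SonarQube.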

Thursday, June 9, 2016

Seamless source "migration" from SOA Suite 12.1.3 to 12.2.1 using WLST and XSLT

When you migrate sources from SOA Suite 12.1.3 to SOA Suite 12.2.1, the only change I've seen JDeveloper make to the (SCA and Service Bus) code is updating versions in the pom.xml files from 12.1.3 to 12.2.1 (and some changes to jws and jpr files). Service Bus 12.2.1 has some build difficulties when using Maven. See Oracle Support: "OSB 12.2.1 Maven plugin error, 'Could not find artifact com.oracle.servicebus:sbar-project-common:pom' (Doc ID 2100799.1)". Oracle suggests updating the pom.xml of the project, changing the packaging type from sbar to jar and removing the reference to the parent project. This however will not help you because the created jar file does not have the structure required for Service Bus resources to be imported. To deploy Service Bus with Maven I've used the 12.1.3 plugin to create the sbar and a custom WLST file to do the actual deployment of this sbar to a 12.2.1 environment. A similar solution is described here.

Updates to the pom files can easily be automated as part of a build pipeline. This allows you to develop 12.1.3 code and automate the migration to 12.2.1. This can be useful if you want to avoid keeping separate 12.1.3 and 12.2.1 versions of your sources during a gradual migration. You can do bug fixes on the 12.1.3 sources and compile/deploy to production (usually production is the last environment to be upgraded) and use the same pipeline to compile and deploy the same sources (using altered pom files) to a 12.2.1 environment.
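The pom update itself is a small transformation. This post uses WLST and XSLT for it; purely as an illustration of the idea, the sketch below does the same kind of version bump in Python. The sample pom, its group and artifact names and the function name `bump_versions` are hypothetical, and the version string format in your own pom files may differ.

```python
import xml.etree.ElementTree as ET

POM_NS = "http://maven.apache.org/POM/4.0.0"

# Hypothetical pom; names and version format are placeholders.
SAMPLE_POM = """<project xmlns="http://maven.apache.org/POM/4.0.0">
  <parent>
    <groupId>com.example</groupId>
    <artifactId>example-parent</artifactId>
    <version>12.1.3-0-0</version>
  </parent>
  <artifactId>demo</artifactId>
  <version>1.0</version>
</project>"""


def bump_versions(pom_text, old="12.1.3", new="12.2.1"):
    """Rewrite every <version> that starts with `old` so it starts with `new`."""
    # Serialize the POM namespace as the default namespace (no ns0: prefixes).
    ET.register_namespace("", POM_NS)
    root = ET.fromstring(pom_text)
    for version in root.iter("{%s}version" % POM_NS):
        if version.text and version.text.startswith(old):
            version.text = new + version.text[len(old):]
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(bump_versions(SAMPLE_POM))
```

Only versions that start with the old release number are touched, so the project's own version (1.0 in the sample) is left alone.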

Tuesday, June 7, 2016

Oracle Database 11g: Virtual database columns

Views in the Oracle database have several uses. You can use them to provide a view of data in different tables as a single object to query. You can use views to achieve a virtualization layer. Views can also be used to provide a user-specific view of data. Implementing views however also has some challenges if you want to 'do it right'. You should consider grants to the table and the view. Maybe create synonyms. You should also consider what will happen if someone does access the underlying table since your data can now be queried from a different place (no single source of truth anymore). Do you want to have the view implement similar functionality as a table by providing an instead-of trigger when performing inserts on the view? Sometimes a view might seem too much for what you want to accomplish. Suppose you want to add a single calculated field to a table. In this case there is a much easier solution than creating a view: a virtual column. The virtual column was introduced in Oracle Database 11g. In this blog post I'll give a simple minimal example of how you can use a virtual column and some things to mind when doing so. Disclaimer: this code will not conform to many standards and is only meant as a minimal example.

Saturday, May 28, 2016

Integration Cloud Service (ICS): Execution Agent proxy issue: NumberFormatException

Integration Cloud Service (ICS) offers an Execution Agent which you can download and install on-premises. This provides a local ICS instance. The Execution Agent is useful in several situations. First, when you have an ICS trial, it is valid only for a period of 30 days. After initial installation (which does require an ICS subscription), you can use the Execution Agent indefinitely. Secondly, you have full control over the Execution Agent since it is a local installation and not managed by Oracle, as the Oracle Cloud instances are. This means you can for example log all requests and replies, install and test a custom Cloud Adapter or browse the Service Bus log files and deployments in case something goes wrong. Currently this is not possible in the Oracle Public Cloud without creating SRs. This blog post is based on the below version of ICS and might not be valid in future versions.

You can download the Execution Agent from the Agents page:

The installation requires Oracle Enterprise Linux 6 UC4 or above. Read the documentation here.

Monday, April 11, 2016

My first NodeJS service

Microservices implemented in JavaScript running on NodeJS are becoming quite popular lately. In order to gain some experience with this, I created a little in-memory NodeJS cache service. Of course statefulness complicates scalability, but if I had also implemented a persistent store to avoid this, the scope of this blog article would have become too large. Please mind that my experience with NodeJS is limited to a NodeJS workshop from Lucas Jellema and a day of playing with NodeJS. This indicates it is quite easy to get started. In this blog I'll highlight some of the challenges I encountered and how I solved them. I'll also briefly describe what Oracle is doing with NodeJS. Because the JavaScript world changes rapidly, you should also take into account the period between when this blog was written and when you are reading it; it will most likely quickly become outdated. You can download the code from GitHub here.

Wednesday, March 23, 2016

Oracle Integration Cloud Service (ICS): A developer's first impression

Oracle provides ICS (Integration Cloud Service) as a simple means for citizen developers to do integrations in the cloud and between cloud and on-premises. On the Oracle Fusion Middleware Partner Community Forum I got a chance to get some hands-on experience with this product in one of the workshops. In this blog post I will describe some of my experiences. I'm not the target audience for this product since I am a technical developer and have different requirements compared to a citizen developer. I've not been prejudiced by reading the documentation ;)

I experimented with ICS on two use-cases. I wanted to proxy SOAP and REST requests. For the SOAP request I used a SOA-CS Helloworld web-service and for the REST request I used an Apiary mockservice. I will not go into basics too much such as creating a new Connection and using the Connection in an Integration since you can easily learn about those in other places.

Sunday, February 28, 2016

Asynchronous interaction in Oracle BPEL and BPM. WS-Addressing and Correlation sets

There are different ways to achieve asynchronous interaction in Oracle SOA Suite. In this blog article, I'll explain some differences between WS-Addressing and using correlation sets (in BPEL but also mostly valid for BPM). I'll cover topics like how to put the Service Bus between calls, possible integration patterns and technical challenges.

I will also briefly describe recovery options. You can of course depend on the fault management framework. This framework however does not catch, for example, a BPEL Assign activity gone wrong or a failed transformation. Developer-defined error handling can sometimes leave holes if not thoroughly checked. If a process which should have performed a callback terminates for unexpected reasons, you might be able to manually perform recovery actions to achieve the same result as when the process was successful. This usually implies manually executing a callback to a calling service. Depending on your choice of implementation for asynchronous interaction, this callback can be easy or hard.

Monday, January 25, 2016

Service implementation patterns and performance

Performance in service-oriented environments is often an issue. This is usually caused by a combination of infrastructure, configuration and service efficiency. In this blog article I provide several suggestions to improve performance by using patterns in service implementations. The patterns are described globally since implementations can differ across specific use cases. I also provide some suggestions on things to consider when implementing such a pattern. The patterns are technology independent; the technology does of course play a role in the implementation options you have. This blog article was inspired by a session at AMIS by Lucas Jellema and additionally flavored by personal experience.

Monday, January 4, 2016

Simple IoT security system using Raspberry Pi 2B + Razberry + Fibaro Motion Sensor (FGMS-001)

In this article I'll describe how I created a simple home-brew burglar detection system to send me a mail when someone enters my house (so I can call the police). First I explain my choice of components. Next I show how these components combine to achieve the desired functionality. Based on this article you should be able to avoid certain issues I encountered and have a nice suggestion for a simple, relatively cheap burglar detection system.

My purpose was to create a simple security system based on a Raspberry Pi. A Raspberry Pi is a tiny computer which can run a Debian-like Linux distribution called Raspbian. I wanted to avoid going too low-level into sensor configuration and programming. That's why I decided early on to use an extension board and not directly attach the sensors to the Raspberry Pi. I decided to go for the Razberry. I also looked at the GrovePi and Arduino. Both are still too low-level for my tastes though. The Razberry is an extension board for the Raspberry which provides a Z-Wave controller chip. Z-Wave is a wireless protocol popular in the area of home automation. This was an attractive option since if in the future I would want to use additional sensors or maybe even use a commercial home automation system, I could very well get compatibility out of the box. For the sensor, I decided on the Fibaro FGMS-001 Motion Sensor. This is a multi-sensor which allows detection of motion, temperature, luminescence and vibrations. It can even detect tampering and earthquakes (which is relevant since I live in the Dutch city of Groningen).

Z-Wave.Me (the company providing the Razberry), provides software for the Razberry called Z-Way. There are several alternatives. One of the most popular seems to be Domoticz which is provided with OpenZWave. Domoticz allows quite extensive home automation but I was having difficulty getting the sensor to work with OpenZWave so I decided to go with Z-Way. Z-Way supported the sensor out of the box. With the Z-Way server however it was difficult to automate actions based on sensor values. How I solved this is also described in this article.
